Image compression in SMEGv2

There’s a few technical differences between SMEG v1 and the current v2 (SMEGCPC) codebase; most of which come about from looking at the original problems in a different way and tackling them without the burden of existing code.

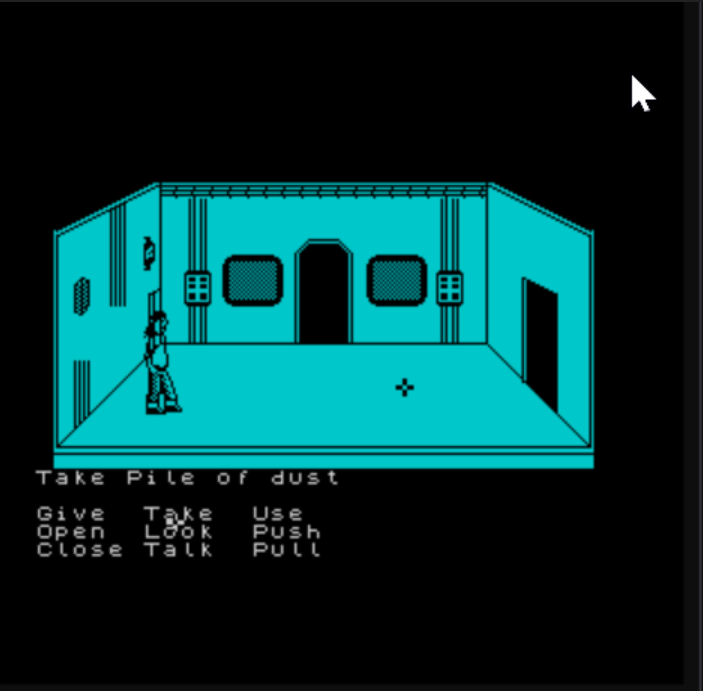

One of the “big” ones to talk about is how I am dealing with background images for the scenes. In both versions the “stage” area is 240x128 pixels (or 30x16 char cells). The stage is where the action plays out; all the characters and objects are drawn out onto a background. It’s the background that really sets the scene the adventure is playing out in.

Backgrounds in V1

In SMEGv1 the background was crafted from a tilemap. Each room had its own tilemap, - but crucially all of the rooms in the demo shared the same tileset. This was great for memory optimization; I loaded one tileset and then the only thing that changes between rooms was the tilemap itself - at 1 byte per cell that made each room’s map 480 bytes.

For the very few rooms I had in my demo, I ended up with 272 unique tiles (2,176 image bytes) - this would have increased over time as I added more content.

The problem is that I’d cheated.

The rooms all had the same “picture” and I drew that using various line and fill commands in Z80 assembly.

This approach saved a bunch of memory, but meant that the game had to always be set in the same “room”. Great for a game set in a sterile space hulk, but terrible for anything that needed variety.

It also ended up creating problems for me in that my props (tiles) needed a solution for masking out that background; it also meant that the screen “dirty” redraw code had to know how to draw that background again.

I begin looking at addressing this in v1 by making the background image part of the tileset as it really should have been in the first place. This work was never completed due to the hiatus I took from the project.

It’s hard to know how big a room’s background would have got in v1 and whether I’d have kept the single tileset, or have gone for the ability to swap them out depending on the room. This likely would have been a requirement as my “base” tileset had hit 251 tiles and I was using one byte per tile for my tilemaps. If I’d gone two byte per tile it’d mean each tilemap took 960 bytes per room.

Even in v1 I was hitting some sizing problems. The 10 rooms I had planned would likely have seen the tileset size double to ~4k with either 4,800 bytes for the rooms, or 9,600 if I was forced to double up the tile size. I was very close to needing compression of some sort, even then.

Backgrounds in V2

One of the main things I wanted to do in v2 was to allow for each scene to be more complex than the basic rooms in v1. I’m still (currently) creating them from tilemaps, but the maps themselves have multiple layers to them. The room images are generated by composing all the layers of the rooms with their masks at build time to create the final “output” image. I could also just hand paint this if needed.

This results in scenes being much more detailed, complex and have more variety (I also have foreground masking available). The challenge that comes with it is how the heck do I store this data?

The two main approaches are tile maps and “raw” images. Tilemaps are great if there’s a bunch of repeat elements but of there isn’t you’re ending up with essentially a unique tile per entry. This could work for the original game I was building, but for v2 where I want the scenes to be different, it’s a harder sell.

Background Images

This leaves us looking at ways to have a raw image that we can use as the background. The hurdle now is that the 240x128 pixel image turns into 7,680 bytes for a mode 1 CPC image; and that’s if the data is stored in non-screen format. On a 64K CPC it gives us around 6 rooms, with no other content in the game.

To solve this we have to start looking at compression routines.

Compressing one of my 7,680 byte images using ZX0 dropped the size down to an impressive 563 bytes. This is great for cold storage, but to do anything with this I’d need to unpack it to a full 7,680 buffer. This is a pretty large overhead for being able to recreate parts of the background when it gets dirty.

Image Strips

One of the techniques I read about from the original SCUMM engine was their use of “image strips”. What they do is decompose the screen into “strips” and then run-length encode each of those. The screen painting routine then draws each of the strips, decoding them on the fly.

Now, in SCUMM the images were (usually) 256 colour images, eg: 1 byte per pixel. With the 8-bits we have a more packed pixel layout to begin with - on the Spectrum it’s 8 pixels per byte; and CPC mode 1 has 4 pixels per byte. It begins to make sense to apply the concept to char cells instead of individual pixels - we almost always deal in single bytes in order to get any kind of performance in the drawing routines. So at the very least the horizontal stride becomes either 4 pixels for mode 1 - or we pick a universal 8 pixel stride for CPC and Speccy. The latter allows for a more uniform drawing api.

The next question becomes about how we define a strip. We can define a strip as:

- a horizontal scanline - 60 unencoded bytes per line, 128 lines

- a horizontal character row - 8 pixels by 8 rows, repeated horizontally per character (480 unencoded bytes per row)

- a vertical character column - 8 pixels by 8 rows, repeated vertically per character (256 unencoded bytes per column)

According to the article on the now-defunct Lucashacks! site, the SCUMM engine applies run-length encoding to vertical pixel strips as they compress to a smaller size. Presumably because there’s a greater chance of a longer run of the same colour.

Encoding Experiments

For this experiment I’ll take the same 7,680 byte image buffer that represents a simple room in the current game I’m making.

The RLE scheme I’ll use is fairly standard and matches that of SCUMM. The “run” counts are interleaved with the data and precede the actual data. If the run count has the high bit set then the data that follows has to be repeated for the count; if the run count doesn’t have the high bit set, then the number of bytes that follows are treated as unencoded, literal bytes. Each run can therefore be a maximum of 127 repeats before needing another counter byte.

This scheme is easy to encode and fast to decode on an 8-bit system.

Running the experiment involves:

- splitting the image into scanlines and applying RLE to each

- splitting the image into character cells and applying RLE to each row

- splitting the image into character cells and applying RLE to each column

| Layout | Size (bytes) | Space saved (%) |

|---|---|---|

| Vertical 8 pixel stride | 1,413 | 81.6 |

| Horizontal characters | 1,697 | 77.9 |

| Vertical 4 pixel stride | 2,129 | 72.28 |

| Horizontal scanlines | 3,252 | 57.66 |

The results are pretty impressive. The vertical character strip encoding is the clear winner here, closely followed by the horizontal character strip encoding. Encoding per-scanline resulted in the worst compression, which was pretty surprising - especially given the great compression from the horizontal character rows. I can only assume here that the data compresses well as a character stream (8x8 bytes) and so the horizontal row layout benefits from that. The vertical columnar layout likely benefits from the 127 run size, in that the best case allows us to encode an entire column in 3 bytes (if they’re all the same).

Drawing / Decoding

So what are the implications for decoding and drawing in these formats?

The horizontally encoded scanlines would draw the fastest. You’re reading and dumping the data to the screen buffer with no manipulation. This would likely be best suited for scenarios that involve chasing the beam.

The vertical stripped character drawing is relatively efficient in the way that it doesn’t involve much jumping around the screen. Just dump the pixel bytes to the screen, increase the pixel line and then repeat til the end. The major drawback here is that it’s likely to be hard to paint a screen quickly without some visible artifacts - it’s drawn top to bottom, left to right. There’s pretty much no chance of being able to beat the electron beam here.

Horizontally stripped character drawing will have a lot more backtracking on the CPC. Draw 8 pixel rows, reset the row address, move right 2 bytes, repeat for the whole line - essentially the same as a text drawing routine, but with RLE decoding happening at the same time.

Where the RLE streams suffer is when only part of the background needs to be drawn. In these cases the stream will need to be decoded in a “seek” fashion to find where we want to draw from, and then draw just enough of that data to suit.

Further compression

It’s worth looking at how well this data compresses further using ZX0. Remember that we only want the active room to be in warm memory, the rest can be bundled up in a ZX0 compressed block until needed.

| Layout | RLE Size (bytes) | RLE+ZX0 Size (bytes) | Space saved (%) |

|---|---|---|---|

| Vertical 8 pixel stride | 1,413 | 639 | 54.78 |

| Horizontal characters | 1,697 | 706 | 58.4 |

| Vertical 4 pixel stride | 2,129 | 719 | 66.23 |

| Horizontal scanlines | 3,252 | 792 | 75.65 |

Unsurprisingly the ZX0 compression does a solid job at further compressing the RLE stream; with +50% savings everywhere. The worst compressed RLE streams saw the biggest gains from the secondary compression. However the winner at straight ZX0 compression is still the original image, which at 563 is a saving of 92.7%.

Conclusions

For SMEG v2’s current art style, the best compromise for background image compression will be the vertical 8 pixel columns. It offers the best warm compression ratio and a relatively sane decoding/drawing process. It will mean that to reconstruct dirty parts of the background I’ll likely need to use a smaller back buffer before copying to the main screen as fast as I can.

This has been a fun exploration and has lead me down a few unexpected paths.

’til next time, smeggers.